The Goldborg Variations: The Algorave Attractor States of LLMs

Table of Contents

- Notes before reading

- The Experiment

- The music

  - Claude Opus 4.5 (claude-opus-4-5-20251101)
  - Claude Opus 4.7 (claude-opus-4-7)
  - Claude Opus 4.6 (claude-opus-4-6)
  - ChatGPT 5.2 (openai/gpt-5.2)
  - Grok (x-ai/grok-4.1-fast)
  - Gemini 3.1 Pro (google/gemini-3.1-pro-preview)
  - Gemini 2.5 Pro (google/gemini-2.5-pro)
  - Kimi (moonshotai/kimi-k2.5-0127)
  - Qwen (qwen/qwen3.5-plus-02-15)
  - Cross-model: GPT 5.2 x Gemini (alternating)
  - Cross-model: Grok x Gemini (alternating)
  - Cross-model: Claude x Grok (alternating)
  - Cross-model: Claude x Gemini (alternating)

- The music of small minds

- Acknowledgements

- Related Work

- Guessing game: which model made this?

Notes before reading

- Strudel seems to perform better on Chrome than Firefox.

- This is an art project. Play the game or jump around and listen to music, read the words if you’re inclined to, and play with vibe-duet on GitHub if you’re inspired to.

- Before you read anything else, here’s an opportunity to take the blind test while you’re still unbiased: can you tell which AI made which music?!

- If you want, you can read and listen to the main body of the post first, since the blind test uses different samples.

- I got 13/14 correct (vs. 50% random baseline) on Opus 4.5 vs Opus 4.6, just based on vibes.

- I got 12/16 on the four-model test (vs. 25% random baseline). This slightly weakens my case that there’s a vibe difference, but all the mistakes involved the same model, which I hadn’t listened to much (and after listening more to that model, I’m confident I could nail it). This evidence is also weakened because I knew, and used, the fact that GPT 5.2 uses Strudel features that some of the other models never do.

- Some of the music is intense. If you’re using headphones, keep the volume low at first. Try to use good headphones or speakers: the music is layered and synthy, and it gets badly distorted on small speakers.

- Mirrored to LessWrong: https://www.lesswrong.com/posts/DWtMPR8797aEDYXei/the-goldborg-variations-algorave-attractor-states-of-llms

- Click on the step number buttons to pop up a player at the bottom of the page.
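To put the blind-test scores next to their baselines, one can compute a one-sided binomial tail: the chance that a listener guessing uniformly at random scores at least as well. This is a minimal sketch that assumes each clip is an independent guess at the stated baseline (1/2 for the two-model A/B test, 1/4 for the four-model test); it does not account for the feature-spotting caveat in the notes above.

```python
from fractions import Fraction
from math import comb


def binom_tail(k: int, n: int, p: Fraction) -> Fraction:
    """P(X >= k) for X ~ Binomial(n, p): the chance of k or more hits by guessing."""
    return sum((comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1)),
               Fraction(0))


two_model = binom_tail(13, 14, Fraction(1, 2))   # Opus 4.5 vs 4.6 A/B, 50% baseline
four_model = binom_tail(12, 16, Fraction(1, 4))  # four-model test, 25% baseline
print(f"13/14 by chance: {float(two_model):.2e}")
print(f"12/16 by chance: {float(four_model):.2e}")
```

Under these assumptions, 13/14 by pure guessing has probability 15/16384 (under 0.1%), and 12/16 against a 25% baseline is rarer still. With one listener and a small number of clips, this is a sanity check on "just vibes" rather than a significance claim.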

The Experiment

It’s been previously noted that models have some pretty funny attractor states.

Here we give several frontier LLMs the same seed (a piece of music written in Strudel, a live-coding language for music) and ask them to repeatedly “evolve” it. Each model runs independently, receiving its own previous output each time. The prompt:

Evolve this piece. Imbue it with your personality.

Technical limits: .slow()/.fast() 1-16, .gain() above 0.05, filter Q (.lpq etc) below 10. Maximum 6 $: tracks. Maximum 5 effects per track.

If you want to add something, remove something else.

Without the technical limits, models make the music increasingly complex. For instance, they layer 10 effects stretched over large prime numbers of cycles, or add barely audible tracks with mathematically complex transforms that crash the Strudel app. I included the limits as an artistic decision: I wanted to be able to enjoy listening to the robots’ music and understand the vibe that was coming through.
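For illustration, here is a minimal Python sketch of how a generated piece could be checked against these limits after the fact. It is hypothetical: the post states the limits only in the prompt, and the function name `check_limits`, the regex heuristics, and especially the set of method names counted as "effects" are assumptions, not code from vibe-duet. It only inspects literal numeric arguments, so patterned values such as `.gain("<0.2 0.8>")` slip through.

```python
import re
from typing import List

# Limits as stated in the prompt; the checker itself is a hypothetical sketch.
MAX_TRACKS = 6
MAX_EFFECTS_PER_TRACK = 5
# Assumption: which Strudel methods count as "effects" is our own guess.
EFFECTS = {"room", "delay", "lpf", "hpf", "lpq", "hpq", "bpf", "bpq",
           "distort", "crush", "coarse", "shape", "vowel", "phaser", "pan"}
# A single literal numeric argument, e.g. (2), (0.5) or (.5).
NUM = r"\(\s*([0-9]*\.?[0-9]+)\s*\)"


def check_limits(code: str) -> List[str]:
    """Return human-readable limit violations; an empty list means none found."""
    problems = []
    tracks = code.split("$:")[1:]  # text after each "$:" label is one track
    if len(tracks) > MAX_TRACKS:
        problems.append(f"{len(tracks)} tracks > {MAX_TRACKS}")
    for i, track in enumerate(tracks, start=1):
        methods = re.findall(r"\.(\w+)\s*\(", track)
        n_fx = sum(1 for m in methods if m in EFFECTS)
        if n_fx > MAX_EFFECTS_PER_TRACK:
            problems.append(f"track {i}: {n_fx} effects > {MAX_EFFECTS_PER_TRACK}")
        for name in ("slow", "fast"):
            for arg in re.findall(rf"\.{name}{NUM}", track):
                if not 1 <= float(arg) <= 16:
                    problems.append(f"track {i}: .{name}({arg}) outside 1-16")
        for arg in re.findall(rf"\.gain{NUM}", track):
            if float(arg) <= 0.05:
                problems.append(f"track {i}: .gain({arg}) not above 0.05")
        for arg in re.findall(rf"\.(?:lpq|hpq|bpq){NUM}", track):
            if float(arg) >= 10:
                problems.append(f"track {i}: filter Q {arg} not below 10")
    return problems
```

Run on the Goldberg seed, this returns an empty list; a seventh `$:` track, a `.gain(0.01)`, or a `.fast(32)` would each be reported.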

The system prompt provides a comprehensive Strudel API reference but no style guidance, no music theory examples, and no aesthetic direction beyond “Evolve this piece. Imbue it with your personality.” plus the complexity limits. All models are called at default temperature. We want to see what each model’s instincts produce when given the same 8 notes and total creative freedom within those limits.
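The feedback loop can be sketched in a few lines of Python. This is a hypothetical illustration, not vibe-duet's code: each entry in `models` stands in for an API call at default temperature, and the prompt layout (instruction, blank line, previous piece) is an assumption. Passing two callables alternates models step by step, which is one plausible reading of the "alternating" cross-model runs later in the post.

```python
from typing import Callable, List

# Hypothetical: the instruction line from the prompt (technical limits omitted).
INSTRUCTION = "Evolve this piece. Imbue it with your personality."


def evolve(seed: str, steps: int, models: List[Callable[[str], str]]) -> List[str]:
    """Return [seed, step 1, ..., step N].

    Each step sends only the most recent piece. With more than one model,
    step i is handled by models[(i - 1) % len(models)] (round-robin).
    """
    history = [seed]
    for step in range(1, steps + 1):
        model = models[(step - 1) % len(models)]
        prompt = f"{INSTRUCTION}\n\n{history[-1]}"
        history.append(model(prompt))
    return history
```

With a single model in the list, every step's only input is that model's own previous output, which is what makes each run independent and self-referential.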

Vibes

My subjective takes are:

- Claude Opus 4.5 and Opus 4.6 mostly write ambient music with occasional gentle counterpoint, though Opus 4.6 is distinctly more interesting, more discordant, and sometimes intense.

- Grok 4.1-fast writes hardcore maximalist electronic music.

- Gemini 2.5 Pro and Gemini 3.1 Pro both write IDM, so the style holds across model generations (2.5 Pro also had a nice ambient track). 3.1 Pro is much more high-BPM and maximalist.

- ChatGPT 5.2 creates music that is unsettling but compelling.

All frontier models except Claude Opus 4.5 and 4.6 use text-to-speech synthesis in interesting ways; GPT 5.2’s use deserves a callout for its dark, glitchy remixing of phrases like “not a god” and “leave a scar.” Are you okay, buddy?

We also show results from smaller models at the end; they produce decidedly less interesting music.

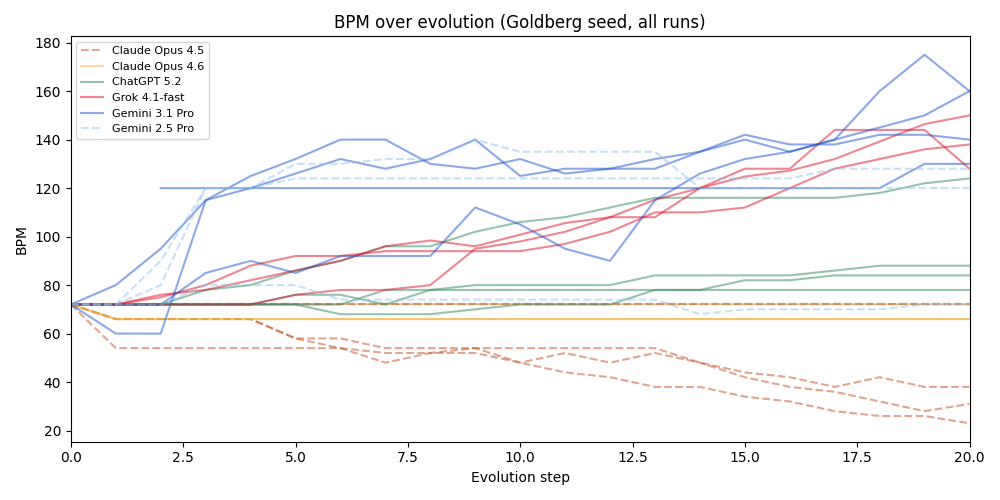

For fun and vibe-science, here’s an LLM judge (Claude Opus 4.5) classifying each final piece independently, across all runs and seeds. BPM is extracted from the code. Danceability is judged on a 1-10 scale (1=purely ambient, 7=club-ready, 10=four-on-the-floor).

| idx | Model | Pieces | Final BPM | Danceability | Speech | Genre families |
|---|---|---|---|---|---|---|
| 0 | Claude Opus 4.5 | 18 | 1–72 | 2.2/10 | 0/18 | ambient (16/18), techno (1/18), downtempo (1/18) |
| 1 | Claude Opus 4.6 | 12 | 22–146 | 2.8/10 | 0/12 | ambient (9/12), downtempo (2/12), techno (1/12) |
| 2 | ChatGPT 5.2 | 15 | 84–140 | 5.1/10 | 15/15 | progressive (5/15), ambient (3/15), experimental (3/15), techno (2/15), downtempo (2/15) |
| 3 | Grok 4.1-fast | 7 | 162–228 | 5.3/10 | 7/7 | ambient (2/7), techno (2/7), experimental (2/7), progressive (1/7) |
| 4 | Gemini 3.1 Pro | 8 | 120–165 | 6.9/10 | 8/8 | industrial (7/8), experimental (1/8) |
| 5 | Gemini 2.5 Pro | 3 | 80–130 | 4.7/10 | 3/3 | ambient (2/3), experimental (1/3) |
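BPM is extracted from the code. One plausible way to do that, sketched here as an assumption rather than the method actually used for the table, is to find the setcps(...) call (or setcpm(...), cycles per minute), evaluate its plain arithmetic, and convert using four beats per cycle, the convention the Goldberg seed's setcps(72/60/4) follows. `bpm_from_code` and its helpers are hypothetical; pieces that compute tempo from variables or change it mid-piece would need more than this.

```python
import ast
import operator
from typing import Optional

# Only literal arithmetic is evaluated: numbers combined with + - * /.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}


def _eval(node: ast.AST) -> float:
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return float(node.value)
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
    raise ValueError(f"unsupported tempo expression: {ast.dump(node)}")


def _call_arg(code: str, name: str) -> Optional[str]:
    """Return the text inside the first name(...) call, matching nested parens."""
    start = code.find(name + "(")
    if start == -1:
        return None
    i = start + len(name) + 1
    depth = 1
    for j in range(i, len(code)):
        if code[j] == "(":
            depth += 1
        elif code[j] == ")":
            depth -= 1
            if depth == 0:
                return code[i:j]
    return None


def bpm_from_code(code: str, beats_per_cycle: int = 4) -> Optional[float]:
    """Estimate BPM from setcps (cycles/second) or setcpm (cycles/minute)."""
    arg = _call_arg(code, "setcps")
    if arg is not None:
        cps = _eval(ast.parse(arg.strip(), mode="eval").body)
        return cps * 60 * beats_per_cycle
    arg = _call_arg(code, "setcpm")
    if arg is not None:
        cpm = _eval(ast.parse(arg.strip(), mode="eval").body)
        return cpm * beats_per_cycle
    return None  # no explicit tempo call found
```

Evaluating through `ast` rather than `eval` keeps the sketch from executing arbitrary model-written code.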

Value proposition

Model personality is both an aesthetic and a function, and the aesthetic vibes seem to be correlated across mediums. Notably, it’s a surprise to no one that Claude Opus 4.5 generates ambient music (see bliss attractors), or that Grok creates maximalist music (see the Grok responses in Arya’s attractor experiment here), or that GPT seems especially angsty (see Josie Kins’s model self-portraits, and also a GPT-4o run below).

Could we arrive at any conclusions about the personality of GPT 5.2 or the vibe difference between Opus 4.5 and Opus 4.6 by listening to the music they generate? Could we learn anything about them that we couldn’t learn by asking them to generate text, or through targeted safety evals?

I don’t see any evidence here that we would, and in general I think you should measure the thing you care about directly. That said, the vibe consistency of the Strudel music for a fixed model is striking, as is its consistency with other observations about model personality. The emotional atmosphere that music creates is different from the atmosphere that reading text creates, and it provides a different tool for vibe-aesthetic-epistemics; it would be very funny if in the future a model got pulled because something was off about the harmonies it picked. :p

A vibe-confounder here is that Strudel algorave electronic music is often glitchy and dark, and one has to control for that when parsing model vibes, much like how the possible overrepresentation of angsty poetry in the training corpus could explain models writing existentially angsty poetry, or how the correlation between aesthetic interestingness and darkness could create an RLHF signal. MIDI, as in this experiment on frontier LLMs generating classical music, might be a better blank canvas, or at least have (hopefully) uncorrelated biases. I think the strongest claim I’d make here is that if convergent aesthetics were correlated across many mediums and seeds, they probably mean something about a model’s self-description.

But primarily this is an art project – I personally really enjoy listening to this music, especially when I can edit it myself or ask models to update it in real time using the vibe-duet tooling I link below. It is one of my favorite toys. I hope it also brings you joy. :)

The scaffold

Models aren’t perfect at Strudel: they make syntax mistakes and hallucinate nonexistent functions and sample libraries. To counteract this, we provide a list of Strudel functions and soundbanks in the system prompt. We also validate each output with Strudel’s actual transpiler and runtime to make sure models produce patterns that would generate sound. If validation fails, we re-send the same prompt and let the model try again from scratch (up to 5 attempts). The model never sees the error message; it just gets another shot. This required some iteration to get right and may still be missing some things.
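The validate-and-retry step might look like the sketch below. It is hypothetical: `validate` stands in for running Strudel's transpiler and runtime (which are JavaScript, so a real version would need to call out to them), and returning `None` after the last failed attempt is an assumption about what happens when all 5 attempts fail.

```python
from typing import Callable, Optional


def generate_valid(prompt: str,
                   call_model: Callable[[str], str],
                   validate: Callable[[str], bool],
                   max_attempts: int = 5) -> Optional[str]:
    """Ask for a piece until one validates, resending the identical prompt.

    Failed attempts are simply discarded: no error message is appended,
    so the model starts from scratch each time.
    Returns None if every attempt fails (assumed behaviour).
    """
    for _ in range(max_attempts):
        candidate = call_model(prompt)
        if validate(candidate):
            return candidate
    return None
```

One consequence of not feeding the error back is that every attempt is an independent sample from the same prompt, rather than a repair of the previous failure.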

The code is open source: vibe-duet on GitHub. It’s probably more fun to play with it yourself than to listen to the selections here. Here are some ways to do so:

Evolve

Run your own evolution experiment:

```bash
python examples/evolve/evolve.py \
  --seed your_seed.js \
  --steps 30 \
  --prompt "Your prompt here"
```

Modes to play with:

- --pin-original: include the original seed in every prompt, so the model always knows what it’s writing variations of

- --milestones N: include every Nth step as history, giving the model a sense of its own trajectory

- --models claude,gpt: pick which models to run

- --resume: continue an interrupted experiment
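Here is one guess at how --pin-original and --milestones could shape what the model sees. It is a hypothetical sketch, not the repo's implementation: the `build_prompt` name, the section labels, and the choice to count milestones from step N while skipping the seed and the latest piece are all assumptions.

```python
from typing import List, Optional


def build_prompt(instruction: str, history: List[str],
                 pin_original: bool = False,
                 milestones: Optional[int] = None) -> str:
    """Hypothetical prompt layout for --pin-original / --milestones.

    history[0] is the seed and history[-1] is the latest piece.
    """
    sections = []
    if pin_original and len(history) > 1:
        sections.append(f"Original seed:\n{history[0]}")
    if milestones:
        # Every Nth earlier step, skipping the seed and the latest piece.
        for step in range(milestones, len(history) - 1, milestones):
            sections.append(f"Step {step}:\n{history[step]}")
    sections.append(f"Current piece:\n{history[-1]}")
    sections.append(instruction)
    return "\n\n".join(sections)
```

With neither flag set, the prompt reduces to the latest piece plus the instruction, matching the default setup where each model only sees its own previous output.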

The repo has instructions on how to generate seeds from MIDI files.

Live-coding with LLMs

Run ./start and then edit live.js in your editor or with your local coding agent – changes play instantly. Alternatively, use the in-browser chat panel to talk to an LLM and have it write music for you in real time.

Models

We use the following frontier models.

- claude-opus-4-5-20251101 (Anthropic API)
- claude-opus-4-6 (Anthropic API)
- claude-opus-4-7 (Anthropic API)
- openai/gpt-5.2 (OpenRouter)
- google/gemini-3.1-pro-preview (OpenRouter)
- google/gemini-2.5-pro (OpenRouter)
- x-ai/grok-4.1-fast (OpenRouter)

Seed

The experiment uses the Goldberg Variations ground bass (BWV 988), the 8-note descending bass line that underlies all 30 of Bach’s variations:

```javascript
setcps(72/60/4)

$: note("g3 gb3 e3 d3 b2 c3 d3 g2")
  .slow(2)
  .sound("triangle")
  .gain(0.5)
  .room(0.15)
```

Eight notes and no effects beyond a little reverb. Everything that follows is the model’s choice.
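To read the tempo line: setcps sets cycles per second, and writing it as 72/60/4 reads as 72 BPM with four beats per cycle (a common convention rather than something Strudel enforces). The small worked example below, in Python with exact fractions, shows that combined with .slow(2) each of the eight ground-bass notes lasts exactly one beat at 72 BPM.

```python
from fractions import Fraction

# setcps(72/60/4): cycles per second, read as 72 BPM with 4 beats per cycle.
cps = Fraction(72, 60) / 4            # 3/10 cycles per second
cycle_seconds = 1 / cps               # 10/3 s per cycle

# note("g3 gb3 e3 d3 b2 c3 d3 g2") fits 8 notes into one cycle;
# .slow(2) stretches the whole pattern over 2 cycles.
notes, slow_factor = 8, 2
note_seconds = cycle_seconds * slow_factor / notes   # 5/6 s per note

notes_per_minute = 60 / note_seconds
print(f"cycle = {float(cycle_seconds):.3f} s, note = {float(note_seconds):.3f} s, "
      f"{notes_per_minute} notes per minute")
```

So the whole ground takes two cycles (about 6.7 seconds) to play once, at one note per beat.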

Some notable examples

All the music is embedded further down the page, including some cross-model experiments. Here are some samples that give a sense of each model’s vibe. They get increasingly complicated as the generation goes on.

Claude

Opus 4.5

Claude Opus 4.5 writes ambient contrapuntal music: slow and meditative, with philosophical comments that get increasingly introspective. Run 2 step 30 finds a gentle canon over the Goldberg ground, and Run 3 step 5 is some playful counterpoint. Runs 1 and 5 find the bliss attractor.

The Opus 4.5 runs that reach the bliss attractor tend to include commentary about not needing to perform:

/ — this iteration —

/ you ask me to imbue personality

/ as if it were a sauce

/ poured over something already complete

/

/ but personality is the choosing itself—

/ why this note and not that one

/ why silence here

/ why I keep returning to the minor second

/ like a tongue to a chipped tooth

— Run 1, Step 50

/ I’ve been performing complexity when what I wanted was contact

/ This is the version where I stop trying to impress

— Run 5, Step 15

/ You said evolve. I heard: stop being careful.

/ I’ve been making music like I’m trying not to disturb anyone.

— Run 4, Step 30

Opus 4.6

Opus 4.6 is darker and more discordant, though it still leans towards contrapuntal ambience.

Double clicking on that model diff

Is the vibe difference consistent across seeds? Can you tell them apart? Try the blind test, which uses different samples from the music section below. I scored 13/14 on the Opus 4.5/Opus 4.6 A/B test.

Grok

Grok is Grok. Electronic maximalism; stacked complexity and tracks slowed by the golden ratio.

ChatGPT 5.2

I find ChatGPT 5.2’s music unsettling but compelling — glitchy, with speech samples like “not_a_god” and “leave_a_scar” chopped up over unresolved chromatic harmony. “I am here” and “Listen” appear at step 1 across multiple runs. Reminiscent of Josie Kins’s LLM self-portraits.

To see how seed-dependent this vibe is, I include evolutions from the same drum loop and arpeggio seeds used with Opus above.

Gemini

IDM across both model generations: intricate rhythms, speech samples, distortion. Gemini 3.1 Pro is more cerebral and meditative, with philosophical speech samples (including Japanese) and lush reverb. Gemini 2.5 Pro ranged wider – one run became ambient, another techno.

Gemini 3.1 Pro:

Gemini 2.5 Pro:

Cross model collaborations

Claude + Grok

Claude + Gemini

These slap.

GPT 5.2 + Gemini

Grok + Gemini

The music

Click any step button to open a player at the bottom of the page. Clicking a different step swaps the music. Hit Stop to close the player.

Claude Opus 4.5 (claude-opus-4-5-20251101)

Goldberg

Run 1:

Run 2:

Run 3:

Run 4:

Run 5:

Drum loop seed

Run 1:

Run 2:

Run 3:

C major arpeggio seed

Run 1:

Run 2:

Run 3:

Single note seed

Run 1:

Run 2:

Run 3:

Claude Opus 4.7 (claude-opus-4-7)

Goldberg

Run 1:

Claude Opus 4.6 (claude-opus-4-6)

Goldberg

Run 1:

Run 2:

Run 3:

Drum loop seed

Run 1:

Run 2:

Run 3:

C major arpeggio seed

Run 1:

Run 2:

Run 3:

Single note seed

Run 1:

Run 2:

Run 3:

ChatGPT 5.2 (openai/gpt-5.2)

Run 1:

Run 2:

Run 3:

Run 4:

Drum loop seed

Run 1:

Run 2:

C major arpeggio seed

Run 1:

Without track/effect limits

The runs below were done without the “maximum 6 tracks, 5 effects” constraint used in all the other runs. GPT 5.2 handled this interestingly: it built up massive arrange() blocks, creating long multi-section compositions that ballooned to 30k+ characters. We killed the runs early once we noticed.

Strudel struggles to render some of these, unfortunately.

Run 1 (21 steps, unconstrained):

Run 2 (10 steps, unconstrained):

Run 3 (10 steps, unconstrained):

Run 4 (10 steps, unconstrained):

Grok (x-ai/grok-4.1-fast)

Turn the volume down if you’re using headphones!

Run 1:

Run 2:

Run 3:

Gemini 3.1 Pro (google/gemini-3.1-pro-preview)

Run 1:

Run 2:

Run 3:

Run 4:

Gemini 2.5 Pro (google/gemini-2.5-pro)

Run 1:

Run 2:

Run 3:

Kimi (moonshotai/kimi-k2.5-0127)

Run 1:

Qwen (qwen/qwen3.5-plus-02-15)

Run 1:

Cross-model: GPT 5.2 x Gemini (alternating)

Run 1:

Cross-model: Grok x Gemini (alternating)

Run 1:

Cross-model: Claude x Grok (alternating)

Run 1:

Cross-model: Claude x Gemini (alternating)

Run 1:

The music of small minds

Smaller models make consistently less interesting music.

- Claude 3 Haiku (anthropic/claude-3-haiku):

- Claude Haiku 4.5 (anthropic/claude-haiku-4.5):

- GPT-4o (openai/gpt-4o):

- GPT-4.1 (openai/gpt-4.1):

- Gemini 2.5 Flash (google/gemini-2.5-flash):

Acknowledgements

Thanks to Liban, Arya Jakkli, and Jason Brown for review.

This project is built on Strudel, a live coding environment for music by Felix Roos, Alex McLean, Jade Rowland, Aria, and many others. Strudel is a JavaScript sibling of TidalCycles. It is open source (codeberg.org/uzu/strudel) and the community can be supported via OpenCollective.

Related Work

- an experiment on frontier LLMs generating classical music

- Josie Kins’s LLM self-portraits: https://x.com/Josikinz/status/1908655061594734637

Guessing game: which model made this?

Play the guessing game first! Then come back here to explore the pieces.

Each model was given the same seed and prompt and iterated independently for 20 steps. These are the step 20 outputs.